From Open Source Software to Open Source Strategy

How the Smartest Executives Are Using Open Source Techniques to Optimize Corporate Strategy

Nearly 27 years ago, on July 12, 1999, an Above the Crowd post titled “The Rising Impact of Open Source” opened with this statement: “Perhaps the most powerful movement in the software industry today is the continuing rise of ‘open-source’ development.” With more than a quarter century of hindsight, the success of the open source movement has exceeded even my own optimistic 1999 expectations. Founders have launched thousands of open source initiatives, producing hundreds of VC-backed companies and hundreds of billions of dollars in new market capitalization. The vast majority of new software development now happens on open source platforms.

But while “open source software” is a well-understood concept, a powerful new use of open source has emerged. Over the past fifteen years, a handful of leading business innovators have used open source concepts in an ultra-sophisticated way to achieve critical strategic goals. I call this Open Source Strategy. These efforts are reshaping the power dynamics of entire industries and, once fully understood, are nothing short of sheer genius. If you operate in any industry that involves intellectual property and technology, and you do not fully understand this new landscape, you are exposed.

A Brief History

The modern open source movement traces back to three pioneers. Richard Stallman announced the GNU project on September 27, 1983, kicking off the “free software movement” out of the conviction that restricting source code was unethical. Linus Torvalds released the first Linux kernel in September 1991, frustrated by the limitations of MINIX and inspired in part by GNU’s tools (gcc, bash). He later attended a Stallman speech and was persuaded to release Linux under the GNU General Public License. Linux has since become the most widely distributed operating system in the world.

The third pioneer was Eric Raymond, who in 1997 presented an essay called The Cathedral and the Bazaar at the Linux Kongress in Würzburg, Germany. Raymond was the first to chronicle that software developed in a widely distributed, transparent way (the Bazaar model) was not just an effective way of building software — it was the very best way to build high-quality software. His central thesis: “given enough eyeballs, all bugs are shallow,” which he respectfully christened Linus’s Law. The Cathedral and the Bazaar is as critical to the history of software as Adam Smith’s Wealth of Nations is to economics. It unlocked the core reason broad open source approaches will always outperform proprietary closed “Cathedral” efforts: complex software gets better when problems are widely distributed across many minds. A remarkably prescient observation in 1997, and one that has only proven more true since.

Shortly after the essay, the movement adopted “open source” as the new name — a deliberate move away from the more zealous “free software” framing to make it more palatable to commercial endeavors. Just one year later, Red Hat, the leading commercial Linux distributor, IPO’d on NASDAQ. Open source was not only the very best way to build complex software — it was also a means to economic gain.

Benchmark, the firm where I worked as a General Partner for over 25 years, was an early investor in Red Hat and over that time invested in numerous other open source companies, including MySQL (acquired by Sun for $1 billion), SpringSource (acquired by VMware for $420 million), Hortonworks (now Cloudera), Elastic, and Confluent. The economic case for open source is now beyond debate. The list of major outcomes has only grown:

• IBM acquired Red Hat for $34 billion in 2019 — at the time, the largest software acquisition in history.

• Salesforce paid $6.5 billion for MuleSoft in 2018.

• IBM closed its $6.4 billion acquisition of HashiCorp in February 2025.

• IBM completed its $11 billion acquisition of Confluent in March 2026 — its third major open source bet in seven years.

• GitLab IPO’d in October 2021, joining MongoDB, Elastic, and Cloudera on the public markets.

IBM alone has now spent more than $50 billion acquiring open source companies: the three deals listed above (Red Hat, HashiCorp, and Confluent) total roughly $51 billion. Open source is bigger than ever. The question is no longer whether the model works, but how widely it can be applied.

With three decades of hindsight, five core reasons explain why the open source software model is so powerful: leveraged development (more hands on deck, more corner cases explored), better testing and bug discovery (Linus’s Law again: open source is more vetted and more secure), more innovation (more parties trying more things), viral grass-roots distribution (which lowers the cost of distribution for any company built around an open project), and cost savings for customers (no proprietary lock-in). As a result, founders have replicated the Red Hat model in entirely new categories. Let’s call this “classic” open source execution: find a need, kick-start a development initiative, and assuming it takes off, build a company around it to offer service, support, and value-added features.

Four Key New Open Source Developments

Before analyzing the new “Open Source Strategy” models, four developments from the past decade-plus are worth understanding. They have collectively transformed open source from a development methodology into a strategic platform — and they set the table for everything that follows.

1. The Increased Role of the Foundation

As the open source industry has matured, non-profit foundations like the Linux Foundation, the Apache Software Foundation, and the Cloud Native Computing Foundation have become critical to project management — and, importantly, they serve as a referee. That neutrality matters most when multiple competing companies are involved in the same project, which is the situation in nearly every modern Open Source Strategy play.

The Linux Foundation alone now hosts hundreds of projects across cloud, networking, AI, security, hardware, and automotive, with more than 1,000 member organizations. Its services span governance, legal frameworks, marketing, fundraising, interoperability, and training. As one of their internal slides puts it: “The LF Brings Partners Together to Create Ecosystems.” Open source projects today are not just GitHub repositories. The depth of foundation infrastructure is precisely what enables the level of cross-company collaboration that Open Source Strategy requires.

2. CIOs Shift to Open Source

When open source emerged 40 years ago, it was the playground of hackers and hippies. Today, it is the foundational layer of nearly every corporate IT stack. The era of being an “IBM shop” or an “Oracle shop” or a “Microsoft shop” — where one vendor’s proprietary system anchored the architecture and gave that vendor enormous pricing power — is over. The modern CIO is “open source first.”

The data backs this up. The Linux Foundation’s 2025 State of Global Open Source report found deep penetration across enterprise stacks: 55% of operating systems, 49% of cloud and container technologies, 46% of web and application development, 45% of databases, 45% of DevOps, and 40% of AI/ML workloads. The report describes open source as having “achieved mission-critical status.”

When CIOs are asked why, the answers all flow from Linus’s Law. The most-cited benefits in the same survey: improved productivity (86%), reduced vendor lock-in (84%), lower total cost of ownership (84%), faster innovation (82%), better software quality (79%), improved security (78%). Open source is no longer about t-shirts and sandals railing against the corporate machine. It is the machine.

3. The Rise of Amazon Web Services (AWS)

AWS is on a $142 billion annual revenue run rate as of Q4 2025, generated approximately $12.5 billion in operating income in that single quarter, and remains the clear market leader in cloud computing. So what does this have to do with open source?

If you went back to the year 2000 and asked who would lead cloud computing in 2025, most people would have predicted IBM, HP, or Intel — companies that owned IP around computing. They were wrong because the components underlying AWS had already been commoditized by open source. No IP-owning vendor could “hold up” Amazon with license fees or litigation threats. This is classic supplier power in Michael Porter’s Five Forces analysis — or more precisely, the complete lack of it. AWS is the cleanest demonstration of what happens when an entire stack gets commoditized: the operator wins, not the IP owner.

4. China Discovers and Embraces Open Source

Over the past several years, U.S.–China tensions have deepened, with sweeping U.S. export controls on advanced semiconductors, scrutiny of Chinese acquisitions, and broad debates over technology decoupling. One of the most consistent criticisms from the West has been the alleged theft of intellectual property. In that environment, China’s vast population of talented engineers continues to compete, running close behind engineers in the rest of the world.

Consider the brilliance of embracing open source if you are in China’s shoes. No one can accuse you of IP theft because the very tenet of open source is that no one controls the code. If it’s open, it’s open. There is no “theft” — just contribution and collaboration.

China’s tech giants figured this out early. Huawei joined the Linux Foundation as a Platinum member in 2016. Tencent followed as Platinum in 2018, with a board seat. Alibaba Cloud and Baidu joined as Gold members. By 2025, Chinese companies were the third-largest contributor base to CNCF projects.

But the bigger development is that the Chinese government has now formally embraced open source as national strategy. China’s 14th Five-Year Plan (2021–2025) was the first to enshrine open source in its planning documents. Five years later, China’s 15th Five-Year Plan (2026–2030), approved in March 2026, went further — explicitly committing to grow open source communities as part of national innovation strategy, with the 2026 Government Work Report calling for Chinese AI models to lead the global open source ecosystem. Two consecutive five-year plans now treat open source as a pillar of China’s technology agenda. That is not an accident.

The most striking validation came in January 2025, when DeepSeek released R1 — an open source LLM that briefly tanked U.S. AI stocks and forced a public reckoning with how fast Chinese AI capabilities had advanced. Other Chinese AI labs — Moonshot’s Kimi, Zhipu’s GLM, Alibaba’s Qwen — have followed suit. Meanwhile, leading U.S. AI labs have largely kept their frontier models closed.

The strategic logic is obvious: when you are the follower in a technology race, open source is your most powerful weapon. You commoditize the leader’s advantage, attract a global community of contributors, and sidestep every IP-related accusation in the process. China’s broad embrace of open source is a massive boon for the open source movement as a whole.

Open Source Strategy: How It Works

In the same 1999 blog post mentioned at the start of this post, I concluded with the following assertion:

Open source as a production model should be appreciated in the same light as Henry Ford’s assembly line or Deming’s Just-In-Time manufacturing process. By taking advantage of the electronic communication medium of the Internet as well as the distributed skills of its volunteers, the open-source community has uncovered a leveraged development methodology that is faster and produces more reliable code than traditional internal development. You can pan it, doubt it, or ignore it, but you are unlikely to stop it. Open source is here to stay.

Here is the mechanism I am calling Open Source Strategy. A small number of forward-thinking companies have learned to use open source as a deliberate corporate-strategy tool — to neutralize a stronger competitor, commoditize an expensive input, align an industry around a shared standard, or head off a regulatory crisis. Every one of these companies fully understands two things: (1) open source will always produce superior, more secure code than a closed alternative, and (2) if you have a reason to want an industry to coordinate around a single non-proprietary architecture, open source is the most powerful tool available to make that happen. The six examples that follow are the playbook in action.

The 1999 post included this comment: “At the end of the day, open source may prove to be more of a defensive weapon than an offensive one.” This is important to understand, because most Open Source Strategy plays are defensive at heart. You don’t need to beat your competitor outright to win. You can improve your strategic position by thwarting a threat, building a moat, or removing a competitor’s pricing power over you. As you’ll see in the examples, defense is usually the goal — and the offensive payoff often comes later, almost as a byproduct.

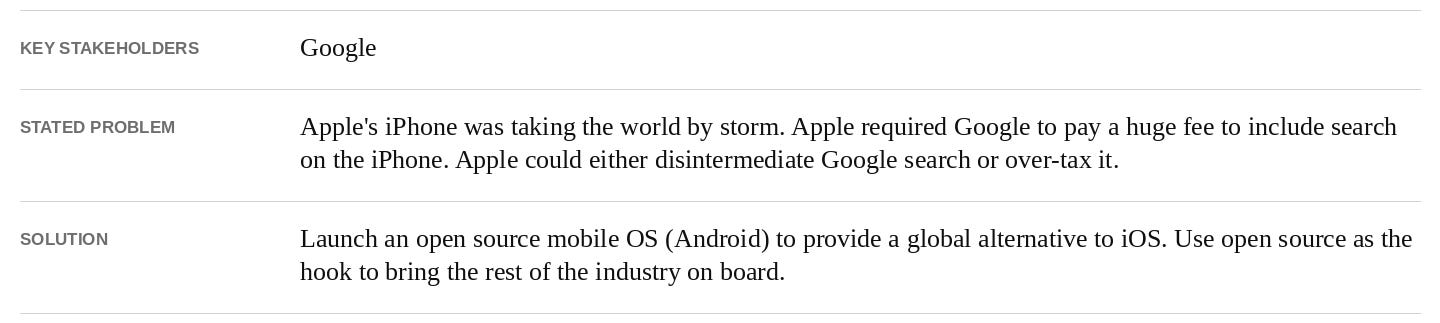

Example 1: Android (2007)

Google’s launch of Android as an open source alternative to Apple’s iOS is the best-known and most successful strategic use of open source. But that understates the accomplishment. Google’s use of open source to counter what could have been Apple’s outright dominance in mobile handsets is one of the most cunning, bold, and value-creating (or value-protecting) business achievements of all time. Google both headed off a massive potential threat from the iPhone and built a massive new business around the Google Play Store.

It is hard to put ourselves back in 2008 when Apple’s iPhone was exploding. At the time, Apple supported a single carrier in AT&T. Why only one? Carriers were used to controlling and dictating the user interface of phones on their networks. Apple needed a partner that would cave on this point, and they found one. I can only imagine Apple’s bet was: “if we make this wildly successful, the others will be forced to play by our rules as well.” That early success — with AT&T as the sole carrier and Apple holding all the cards — sent everyone in the ecosystem into a panic. Carriers, handset manufacturers, and component providers all wondered the same thing: what if Apple ends up with a Wintel-like monopoly in mobile?

It was that anxiety that opened the door for Google’s brilliant open source strategy around Android. Using “open” rhetoric as a reason everyone should trust Google, the company convinced nearly the entire rest of the industry to get behind a less-closed answer to Apple. The group was organized through the Open Handset Alliance, announced on November 5, 2007 with support from HTC, Motorola, Samsung, Sprint, T-Mobile, Qualcomm, Texas Instruments, and of course Google. The shared belief: anything is better than Apple controlling the whole industry. The “open” part gave them hope that no single company would control Android. That hope turned out to be misplaced.

Over the past nearly two decades, Google has unquestionably crushed it on Android execution. Android is the clear leader in global handset operating system share at roughly 73%, powering an estimated 3.9 billion active devices. On top of that, Google was able to “recapture” control of Android despite the project’s original positioning as truly open. This was accomplished primarily by tying access to other key Google products — Search, Maps, Gmail, YouTube, the Play Store — to a “Google-approved” version of Android. If you don’t ship the official version, you don’t get those critical apps. Notably, the Open Handset Alliance never used a third-party referee like the Linux Foundation to govern the project, which made it much easier for Google to maintain control over Android’s evolution.

As mentioned earlier, sometimes companies use open source primarily to play defense rather than offense. As I discussed in depth in my 2011 post, The Freight Train That is Android, Google’s search business stood to be potentially disrupted if Apple controlled the majority of mobile handsets. Apple required Google to pay a hefty fee to be the default search engine in Safari, much like the fee Google pays to Mozilla. Wall Street was starting to notice and care. Android offered massive relief from this potential toll-taker standing between the customer and Google’s search product. That is why Android was so, so valuable to Google. It erected a mile-wide moat around the search business — and this is before Android generated any direct revenue (which it eventually did in spades). Bigger moats lead to higher valuation multiples, as investors can rest assured cash flows will keep coming for years.

The biggest non-Google beneficiary of Android’s open source origins was China. The Chinese internet ecosystem was effectively gifted a full-featured mobile OS, and the market there played out the way handset vendors and carriers had probably hoped the U.S. market would. There are many companies competing with different versions of Android, multiple “Play Store” equivalents, and, with Google’s other properties unavailable in China, none of the tying strategy was on the table. Perhaps this open source gift is part of what opened China’s eyes to the broader benefits of embracing open source as national strategy.

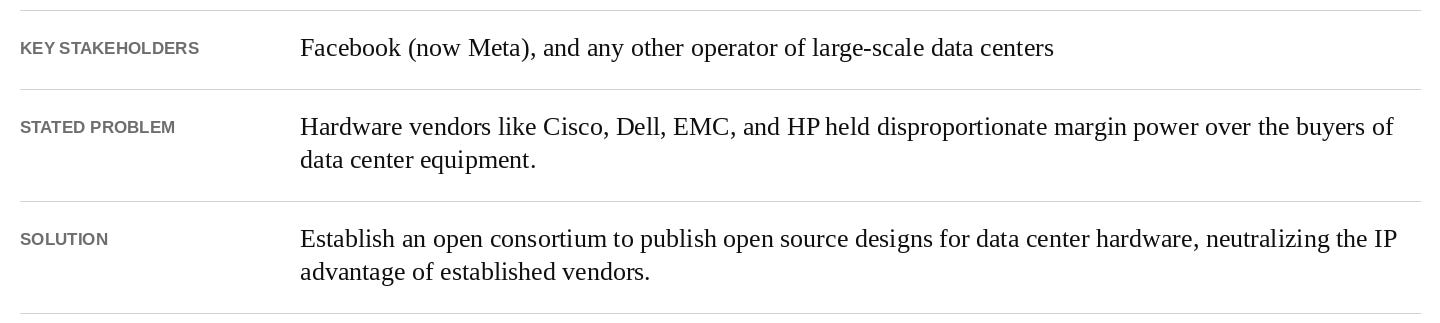

Example 2: Open Compute Project (2011)

In April 2011, Facebook (now Meta) launched the Open Compute Project (OCP) along with founding partners Intel, Goldman Sachs, Rackspace, and Andy Bechtolsheim. The premise was straightforward: there was no good reason for hyperscale data center operators to keep paying premium prices to traditional hardware vendors when they could collaborate on open hardware designs. Facebook had already done much of the design work in-house — they simply contributed it to a foundation and invited the world to participate.

OCP has been an enormous success. By 2025, the foundation had more than 400 member organizations including AWS, Microsoft, Google, Meta, Apple, Cisco, Dell, HPE, Intel, AMD, Nvidia, and essentially every major player in the data center supply chain. Industry analysts at Omdia estimate that OCP-recognized infrastructure spending will reach approximately $132 billion in 2025 and grow to $295 billion by 2029. That is a large slice of global IT capex now flowing through open hardware specifications.
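To put the Omdia projection in perspective, the two figures quoted above imply a compound annual growth rate. The sketch below is only back-of-the-envelope arithmetic on those two numbers; the function name `cagr` is an illustrative helper, and the calculation assumes smooth compounding over the four years from 2025 to 2029, which the underlying forecast may not.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` periods."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Omdia estimates quoted in the text, in billions of U.S. dollars.
ocp_2025 = 132.0
ocp_2029 = 295.0

# Assumption: growth compounds evenly across the four intervening years.
implied_growth = cagr(ocp_2025, ocp_2029, years=2029 - 2025)
print(f"Implied annual growth: {implied_growth:.1%}")
```

Under that smooth-compounding assumption, the implied growth rate works out to a little over 20% per year.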

Meta’s capex tells the underlying story. The company spent approximately $72 billion on capex in 2025 and has guided to $115–135 billion for 2026, the vast majority of which goes to data center infrastructure. Without OCP and the commoditization it has driven, Meta would be paying significantly more — and would be far more exposed to a small number of vendors with pricing power. Instead, Meta gets best-of-breed open designs, multiple commodity manufacturers competing for its business, and the benefit of every other hyperscaler’s contributions to the same designs.

OCP is a textbook Open Source Strategy play. Meta did not need to build a new business to monetize OCP — they just needed to eliminate the supplier power of the hardware vendors. That is enormously valuable: every dollar that doesn’t go to vendor margin flows to Meta’s bottom line or accelerates its infrastructure investment. And because OCP is hosted under a neutral foundation, no single member can recapture the project the way Google recaptured Android.

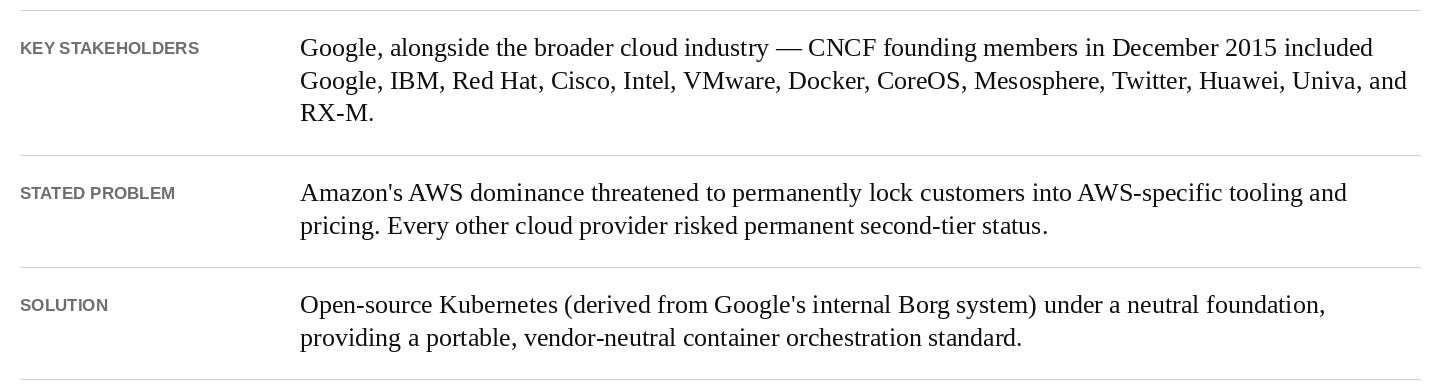

Example 3: Kubernetes (2014)

In June 2014, Google open-sourced Kubernetes — a container orchestration system derived from its internal Borg system, which Google had used for years to run its own services at planetary scale. The following year, Google donated Kubernetes to the newly-formed Cloud Native Computing Foundation (CNCF), which became an arm of the Linux Foundation. The strategic reasoning was as transparent as it was brilliant.

Amazon Web Services was running away with the cloud market. AWS’s margins were enormous, its customer lock-in was real, and every customer that built deeply on AWS-specific services became progressively harder to migrate. Google Cloud was a distant third. Microsoft Azure was a distant second. The entire industry had a problem — and that problem had a name. Google’s answer was a now-familiar move: take a piece of valuable internal IP, give it away under a neutral foundation, and watch the rest of the industry rally around it as the standard.

It worked. Kubernetes became the de facto standard for container orchestration with remarkable speed. By 2025, the CNCF had more than 800 member organizations. Independent surveys found that 82% of organizations are running Kubernetes in production, and 66% of those running generative AI workloads in production are doing so on Kubernetes. The CNCF launched an AI Conformance Program in November 2025, ensuring Kubernetes remains the standard substrate for AI infrastructure as well. Notably, AWS — the very company Kubernetes was designed to neutralize — is now a Platinum-tier contributor to the project. AWS had to support Kubernetes because its customers demanded it. That is exactly what the Open Source Strategy playbook is designed to accomplish.

The Kubernetes story is now the playbook running for the third time. Google used open source to neutralize Apple’s mobile dominance with Android. Google used open source to neutralize Amazon’s cloud dominance with Kubernetes. And Google is now positioned to use open source to shape the AI infrastructure layer — whether through Kubernetes itself, through TensorFlow, JAX, or other contributions. There is a pattern here, and it is not subtle. Google has internalized Open Source Strategy at a deeper level than any other company in the world.

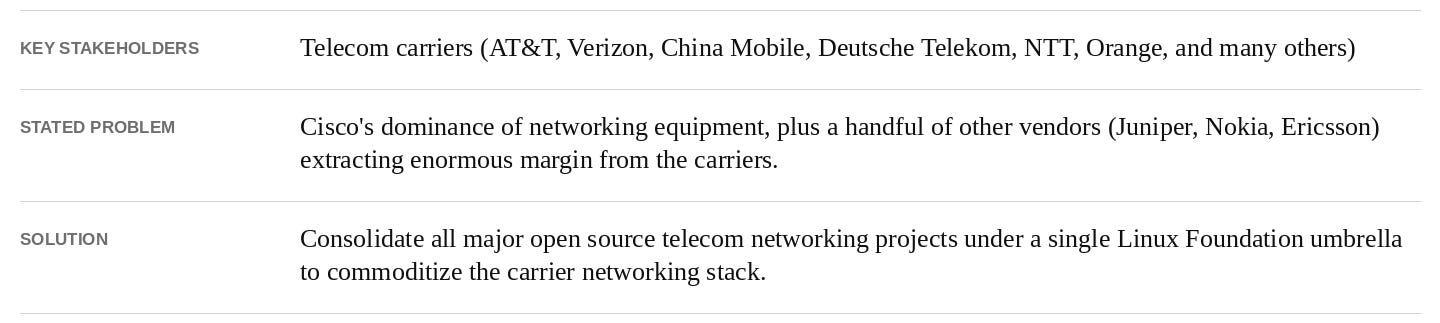

Example 4: LF Networking (2018)

In January 2018, the Linux Foundation officially launched LF Networking by combining several existing open source telecom networking projects — including ONAP, OPNFV, OpenDaylight, FD.io, PNDA, and SNAS — into a single umbrella organization. The strategic motivation was straightforward and familiar. Telecom carriers had been paying enormous margins to a small number of equipment vendors for decades. Cisco alone had gross margins north of 60% on its networking business. Juniper, Nokia, and Ericsson all enjoyed similar pricing power. The carriers — who collectively spend hundreds of billions of dollars per year on network equipment — wanted to commoditize the layer.

LF Networking has grown to more than 100 members including AT&T, Verizon, China Mobile, China Telecom, Deutsche Telekom, NTT, Orange, Vodafone, Bell Canada, Reliance Jio, Telstra, Comcast, and most other major carriers. Vendor members include Cisco, Juniper, Nokia, Ericsson, Huawei, Red Hat, VMware, Intel, Samsung, and many others. The Linux Foundation estimates that LF Networking projects help power approximately 70% of the world’s mobile subscribers. New initiatives have continued to expand the scope — O-RAN for open radio access network architecture, Nephio for cloud-native network automation, and Duranta for AI-driven network operations. A 2024 LF survey found that 92% of telecom organizations now prioritize open source as a strategic input.

Has it worked? The honest answer is mixed. Cisco’s gross margins remain high — around 65–68% in fiscal 2025 — and the AI infrastructure tailwind has actually boosted Cisco’s near-term position. But look at the broader vendor landscape and the picture is far more telling. Juniper was acquired by HPE for $14 billion in July 2025 — a clear sign that the standalone networking equipment business model was no longer attractive enough to justify independent existence. Nokia’s mobile networks revenue declined 21% in 2024. Ericsson cut more than 25,000 jobs across 2023–2024. The global telecom equipment market shrank 11% in 2024 — its steepest annual decline in over 20 years.

The carriers haven’t fully commoditized Cisco the way Meta commoditized hardware vendors with OCP. But they have collectively created a much harsher operating environment for the entire telecom equipment industry. New initiatives like Open RAN are progressively eating into the proprietary radio access network business that has historically been dominated by Ericsson, Nokia, and Huawei. The strategic logic is the same as in every other example: erode supplier power, prevent any single vendor from controlling the architecture, and reclaim margin. The execution takes longer in telecom than in other industries because of the complexity of regulatory environments and the long lifecycle of installed equipment, but the trajectory is unmistakable.

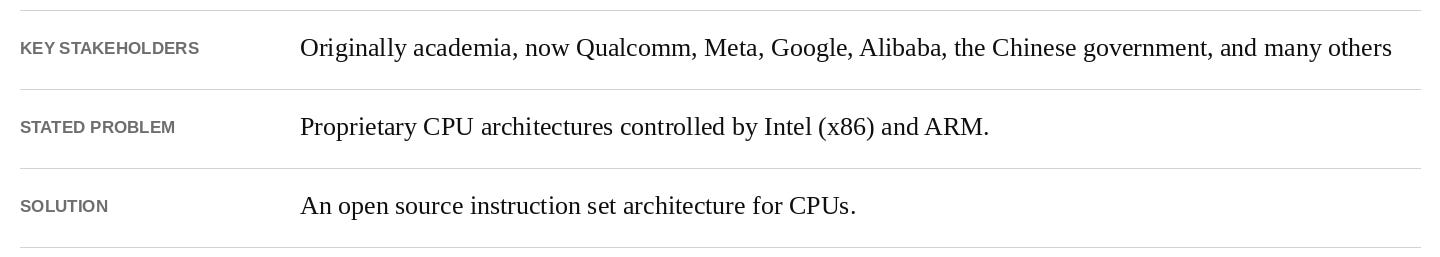

Example 5: RISC-V (2010)

The open source project known as RISC-V is a bit different from the first four examples. Its roots are much closer to Richard Stallman’s free software movement. A group of academics led by David Patterson at UC Berkeley had a crazy idea: if you can design and build open source software, why can’t you design and build open source CPUs?

I had a chance to ask David Patterson about his original motivation, and the response was quite consistent with other open source pioneers:

Berkeley culture is to make everything open source, and we wanted other researchers to use our ideas. Berkeley values impact, not just papers, so making it available to everyone increases chances of impact, like Berkeley UNIX or the Berkeley Spice CAD program.

I asked him if he ever imagined it would eventually impact the corporate world:

Depends when. In 2010, Berkeley folk were doing it for other academic researchers to use. No idea then. In 2014, when outsiders started using it because they wanted an open architecture, it was easy to believe that it could take off, since it made a lot of sense both technically and economically. I think our slides even back then said that our goal was world domination, which was only partly tongue-in-cheek.

RISC-V has gone from interesting alternative to the most consequential semiconductor story of the decade, with massive geopolitical implications. The two largest CPU vendors, Intel and ARM, both charge per-unit fees to use their architectures, and ARM also charges an upfront fee to design around their architecture. It’s easy to understand why others might favor a non-proprietary, open alternative — and the corporate movement has been dramatic.

Consider what’s happened in just the last several years:

• RISC-V International membership has grown to more than 4,600 organizations across 70 countries.

• Qualcomm acquired Ventana Micro Systems in 2025 for $2.4 billion to accelerate its RISC-V CPU roadmap, alongside its existing ARM-based Oryon line. Qualcomm has shipped roughly 650 million RISC-V cores already.

• Meta acquired Rivos in 2025 to bring RISC-V silicon design fully in-house for AI workloads.

• Major adopters now include Broadcom, Google, MediaTek, Renesas, and Samsung, joining earlier commitments from Western Digital and Nvidia.

• By the end of 2025, industry analyst SHD Group estimated RISC-V had reached roughly 25% market penetration in silicon — with an estimated 20 billion cores in operation worldwide.

Now consider the geopolitical dimension. Earlier in this post I described how China embraced open source as a strategic answer to U.S. accusations of IP theft. Nowhere is that thesis playing out more vividly than in RISC-V. With U.S. export controls cutting off China’s access to advanced semiconductors, China has elevated RISC-V from a useful alternative to a national priority:

• In March 2025, eight Chinese government bodies — including MIIT and the Cyberspace Administration of China — released a comprehensive policy framework mandating RISC-V integration into critical infrastructure including energy, finance, and telecom.

• Alibaba’s T-Head chip subsidiary has shipped roughly 2.5 billion RISC-V cores across its XuanTie series, including the server-grade C930 (2025) and C950 (2026), with the C950’s CPU core reported to be the most powerful of its kind globally at launch.

• The Chinese Academy of Sciences released the open source XiangShan (“Kunminghu”) high-performance RISC-V design and reportedly modified it to run DeepSeek-R1.

• The U.S. response was telling. Beginning in 2023, House committee leaders, including those of the Foreign Affairs Committee and the Select Committee on the CCP, have publicly pushed the Commerce Department to restrict U.S. participation in RISC-V development if those contributions could benefit “adversary” nations. Multiple legislative proposals have followed. The fact that members of Congress are openly calling for export controls on an open source instruction set is itself remarkable.

When the U.S. government starts trying to restrict open source standards, you know that open source has become strategically consequential. RISC-V is the architectural ground floor for what could be a fully decoupled Chinese compute stack — and there is essentially nothing the U.S. can do to take that loophole away, because RISC-V International is governed in Switzerland and the instruction set itself is open. As one analyst put it, RISC-V is “sanction-proof.”

What about ARM, the obvious incumbent at risk? After Nvidia’s $40B–$66B acquisition attempt collapsed in early 2022 under regulatory pressure, ARM IPO’d on the Nasdaq in September 2023 at a $54.5 billion valuation. Today its market cap sits around $150 billion, riding the AI tailwind. ARM has done well in the near term — but the structural threat from RISC-V has only grown.

Here’s the most telling detail: in ARM’s own SEC filings, RISC-V is flagged explicitly as a competitive risk. ARM’s annual report acknowledges that “our customers may choose to utilize this free, open-source architecture instead of our products.” The same filing notes that many of ARM’s customers are also major supporters of RISC-V — which is exactly what an Open Source Strategy play looks like from the inside. The companies paying ARM for licenses are simultaneously funding the open source alternative designed to commoditize ARM. Especially as China is essentially compelled to migrate off ARM for sovereign computing, ARM’s near-term success and RISC-V’s long-term threat are both real.

Perhaps the most thoughtful early writing on RISC-V came from public investor group NZS Capital, whose July 2019 piece Open Source Semiconductors presciently noted that the end of Moore’s law and the move toward complex multi-chip packaging would favor open source — because complexity favors open source. Linus’s Law applies to silicon, too. What we are watching with RISC-V is exactly that thesis playing out at planetary scale.

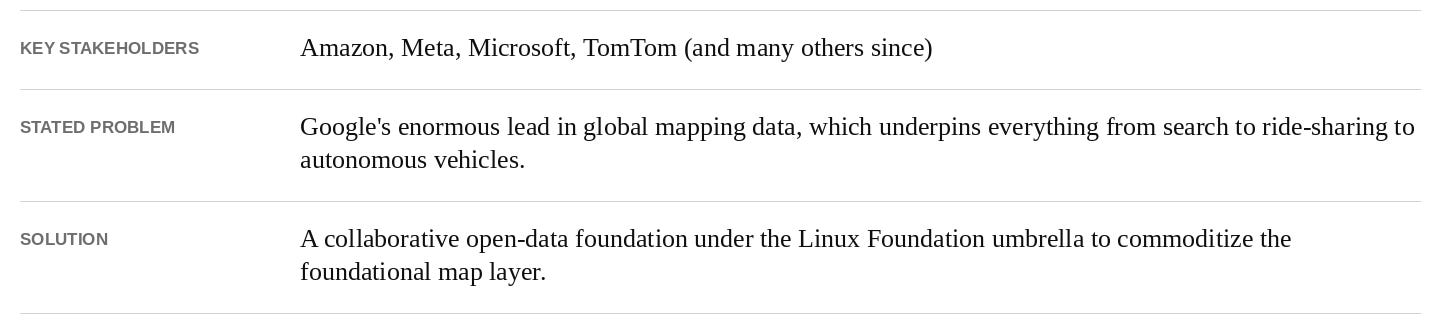

Example 6: Overture Maps Foundation (2022)

The first five examples are now well-established Open Source Strategy plays. The sixth is newer, but it is one of the cleanest examples of the playbook in action — and it deserves a place on the list because it directly sets up the autonomous vehicle discussion that follows.

The problem these companies faced is straightforward. Google has spent more than a decade and untold billions of dollars building the most comprehensive map of the world. That map underpins Google Search, Google Maps, Waze, Android Auto, Waymo, and a long list of ad-supported and subscription products. For every other large technology company that depends on geospatial data — from Amazon’s logistics network to Meta’s social graph to Microsoft’s enterprise products — Google has a structural advantage that compounds every year. And the only economically rational alternative — licensing map data — leaves you dependent on a small number of commercial map providers like TomTom, HERE, and Foursquare.

In December 2022, Amazon Web Services, Meta, Microsoft, and TomTom did exactly what we have seen in every prior example: they joined forces under the Linux Foundation umbrella to create an open, neutral alternative. The Overture Maps Foundation was born with a stated mission of producing reliable, easy-to-use, and interoperable open map data. Rather than relying on a single source, Overture merges OpenStreetMap data with hundreds of other open datasets and with member contributions, then adds quality and consistency layers to create a production-grade base map.

Look at the Open Source Strategy fingerprints:

• A neutral referee: Overture is hosted under the Linux Foundation as a Joint Development Foundation Project, with formal governance and IP frameworks. (Compare to Android, where the lack of a referee allowed Google to “recapture” the project.)

• A common adversary: Every founding member had a strategic interest in commoditizing Google’s map moat without having to build a global mapping operation themselves.

• A repeatable playbook: Just as the Open Compute Project commoditized data center hardware for Meta and the Cloud Native Computing Foundation commoditized cloud infrastructure for everyone-not-named-Amazon, Overture commoditizes the foundational map layer for everyone-not-named-Google.

• A widening tent: Membership has grown to roughly 45 organizations including Esri, Uber, TomTom, and many others — spanning hyperscalers, automotive companies, mapping vendors, and platform players. Each new member has the same basic logic: contributing to the shared base map is cheaper and more strategic than building a global mapping operation alone.

The progress has been faster than typical for a project of this scope. Overture has shipped open map data on a roughly monthly cadence for nearly two years, hit production-ready 1.0 in 2024, and released its Global Entity Reference System (GERS) to general availability in 2025. GERS is exactly the sort of plumbing that is easy to underestimate but enormously valuable — it provides a universal “fingerprint” for physical places (a building, a street, a point of interest) that lets any organization merge its proprietary data with the shared open base map without expensive custom matching work. Overture’s executive director has described the goal as ending the geospatial industry’s “conflation tax” — the longstanding reality that organizations spend more cleaning and matching map data than they do licensing it.

Adoption has been broad. Meta has migrated Facebook and Instagram global basemaps to Overture. Microsoft is using Overture data in Bing Maps and Azure Maps. TomTom has built its Orbis Maps platform on Overture, which now serves its automotive customers. Uber is using Overture. Esri’s ArcGIS uses Overture for its Open Basemap. The pattern is clear: the production users are showing up, and they are not small.
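To make the “conflation tax” concrete, here is a minimal Python sketch of why a shared, stable place identifier matters. Everything in it is hypothetical: the IDs, field names, and records are invented for illustration and do not reflect the real GERS identifier format or any Overture API. It only shows the general idea that once two datasets carry the same place ID, merging them becomes an exact key lookup.

```python
# Hypothetical open base map keyed by a shared, stable place identifier.
# These "place-NNN" values are illustrative stand-ins, NOT real GERS IDs.
open_base_map = {
    "place-001": {"name": "Blue Door Cafe", "lat": 37.7750, "lon": -122.4183},
    "place-002": {"name": "Harbor Books", "lat": 37.7793, "lon": -122.4192},
}

# Hypothetical proprietary rows that reference the same identifiers.
proprietary_rows = [
    {"place_id": "place-001", "avg_wait_minutes": 12},
    {"place_id": "place-002", "avg_wait_minutes": 3},
    {"place_id": "place-999", "avg_wait_minutes": 7},  # absent from the base map
]


def merge_by_shared_id(base_map, private_rows):
    """Attach private attributes to base-map places via exact ID lookup.

    Returns (merged, unmatched): merged maps place_id to the combined record,
    and unmatched lists the IDs that were not found in the base map.
    """
    merged, unmatched = {}, []
    for row in private_rows:
        place = base_map.get(row["place_id"])
        if place is None:
            unmatched.append(row["place_id"])
            continue
        extra = {key: value for key, value in row.items() if key != "place_id"}
        merged[row["place_id"]] = {**place, **extra}
    return merged, unmatched


merged, unmatched = merge_by_shared_id(open_base_map, proprietary_rows)
print(merged["place-001"])
print("unmatched:", unmatched)
```

Without a shared identifier, the same merge would need approximate matching on names and coordinates, with manual review of the ambiguous cases. That matching work is the cost the “conflation tax” phrase refers to.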

The most interesting development is what Overture might mean for AI. As large language models and AI agents increasingly need to ground their outputs in real-world geospatial information — where is this restaurant, what’s nearby, can a vehicle actually drive this route, is this place good for a business lunch — the question of which map underlies that grounding becomes strategically critical. The questions AI is being asked about places are also getting much broader. It used to be enough to know operating hours and address. Now users want subjective, context-dependent judgments — and every additional layer of meaning that gets attached to a place needs to attach to a stable, validated, accurately-grounded reference for that place. That is exactly what GERS provides. If every AI system grounds itself in Google’s proprietary map, Google holds a quiet but enormous lever over the entire AI ecosystem. If AI systems instead ground themselves in a shared open dataset, no single company holds that lever. Overture is now actively pushing the case for AI systems to align around a common, open, well-grounded geospatial layer — and while the agendas are competing and the outcome is not yet settled, the strategic stakes are obvious.

This is an Open Source Strategy play that should sound familiar by now: identify a critical layer where one incumbent has a structural lead, rally the also-rans under a neutral foundation, and turn the layer into a commodity that no single company controls. With each successful example, the playbook gets stronger and more obvious — which is exactly the point. Which brings us to autonomous vehicles.

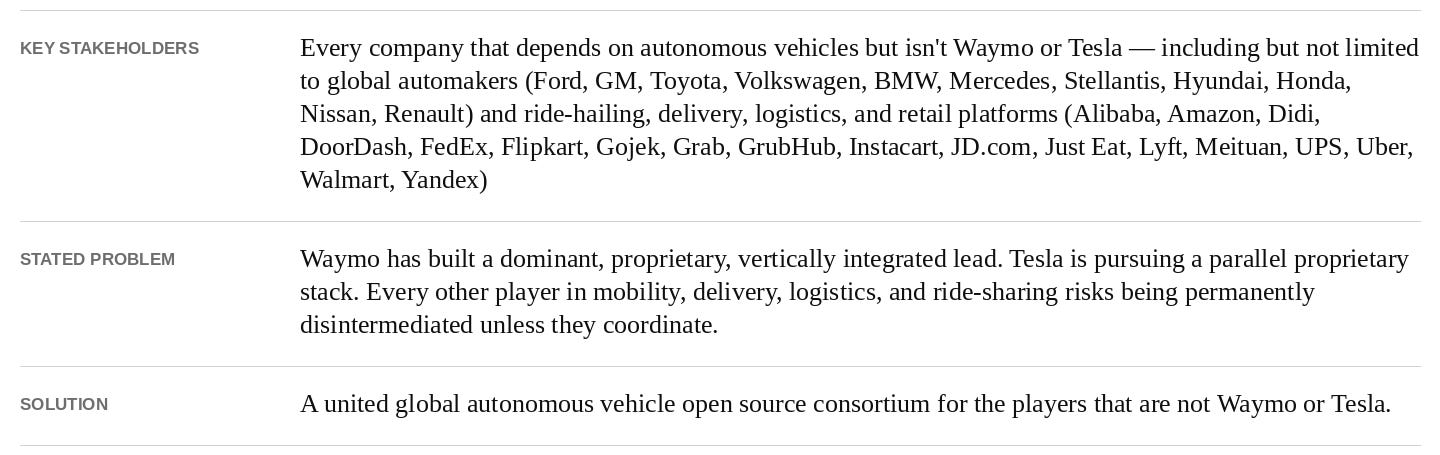

Two Live Real-World Examples

Six historical examples are enough to establish the pattern. But Open Source Strategy is not history. It is happening right now, in two of the most consequential technology arenas of the next decade: autonomous vehicles and artificial intelligence. Both are live. Both are unresolved. Both will turn on the same question: whether the leading proprietary players succeed in keeping their stacks closed, or whether a coalition of followers — corporate, geopolitical, or both — runs the open playbook against them. The two cases are at different stages, with different incumbents and different stakes. But the strategic logic is identical, and the two outcomes will tell us a great deal about how technology competition works for the next twenty years.

Open Source and Autonomous Vehicles

There has never been a technology with a more appropriate fit for a global Open Source Strategy than autonomous vehicles. If you have read this far, the case should feel almost tautological. The question I want to address in this section is not whether an open source AV consortium will inevitably emerge — that is a prediction, and predictions about industry coordination are notoriously hard. The question is what the fifty-plus companies whose business depends on autonomous vehicles but who are not Waymo or Tesla should do. And on that question, I think the answer is now both clear and urgent. Two factors drive it. First, open source will produce a better solution than any single Cathedral effort — one that arrives sooner, is safer and more secure, and is delivered at a lower cost. Second, there is a long list of companies, with combined market capitalization in the trillions of dollars, whose strategic position is at risk. Ensuring that no single company emerges with proprietary control over the technology they will all eventually depend on is in their collective self-interest — and the longer they wait, the harder that position is to recover.

Why an Open AV Solution Is the Right Answer

A global, collaborative, open source platform for autonomous vehicles would produce a far better outcome for society. Here’s why:

1. Better. Linus’s Law is clearly in play. With many eyes on a single shared platform, all the bugs are indeed shallow. Testing and bug discovery will be spread across hundreds of companies and thousands of cities. Academia will participate. Specialists will home in on their areas of focus. Every vehicle will have the opportunity to communicate and share data with every other vehicle, since they all conform to the same standard. Compare this to the alternative — several siloed “Cathedral” offerings operating independently. Those programs will have a small fraction of the test history and vetting of an open shared platform, perhaps by orders of magnitude.

2. Safer. No single feature of an autonomous vehicle matters more than safety. Open source will deliver a safer car for the same reason it delivers a better one — many eyes finding edge cases, more diverse testing environments, faster propagation of fixes. The bizarre asymmetry today is that each major proprietary AV program is rediscovering the same hard problems independently. A bus stopping with flashing lights. A child running into a street. An ambulance with its siren on. Each company is building its own response to each scenario and keeping it private. Imagine instead if every fix to every edge case were immediately available to every vehicle, everywhere. That’s how aviation safety works today, and AV safety should work the same way.

3. More secure. People probably are not focused on AV security yet, but you cannot have safety without it. A hacker taking control of a single vehicle, let alone a fleet, could produce massive fatalities. We already know open source development produces more secure software than proprietary alternatives — Linus’s Law again — and as Linux has demonstrated for decades, an operating system maintained by thousands of contributors under public scrutiny tends to be substantially more hardened than its closed-source equivalents. The same logic applies to autonomous vehicles.

4. Sooner. Autonomous vehicles are way behind the original prognosis from leading vendors. A single shared development effort delivers more total engineering hours per dollar of capex, a richer training-data pool from broader operating environments, and a more comprehensive shared test suite than any single company can build alone. Distributing the workload and sharing all the learning is precisely how the bazaar outpaces the cathedral.

5. Lowest possible price points. Today’s leading AV programs operate well above the cost level required to compete economically with human drivers. Every company that aims to benefit from AV technology benefits even more if the technology is cheaper. A single collaborative open source effort will lead to the lowest possible cost solution. We know this. It’s the same logic already proven by the Open Compute Project, LF Networking, and RISC-V. There is no way a single Cathedral vendor can compete cost-effectively with open source over the long run.

6. Better for government oversight. Governments around the world will demand a role in regulating autonomous vehicles. With an open source solution, both compliance and reporting can be built into the base platform, which makes it dramatically simpler for regulators to do their jobs. Imagine the alternative — overseeing fifteen different proprietary architectures from fifteen different companies, each of which considers its inner workings a trade secret. That’s a regulatory nightmare. An open shared base layer is a regulatory dream.

7. More innovation. With a shared platform, more companies, inventors, and academics get the chance to innovate in ways that expand the platform faster. That could be a focused technology area like LiDAR, vehicle control, or perception. It could be expanding the platform to a new use case like a sidewalk delivery robot, a drone, or an industrial vehicle. It could be the development of a shared test suite or interoperability layer. The point is that you don’t have to work for the one Cathedral vendor that might win in order to contribute. A shared open source strategy lets thousands of entrepreneurs and engineers innovate where they care most.

Why the Strategic Logic Is Compelling

The 2026 reality is much starker than the 2022 picture. Waymo is the runaway leader. Combined Alphabet and outside capital invested in Waymo since inception now exceeds $45 billion, including a February 2026 round that valued the company at $126 billion. Waymo operates commercial robotaxi service in 10 U.S. cities, is preparing to launch in another 10+ in 2026 plus London and Tokyo, and is targeting more than one million driverless rides per week by year-end. Tesla is pursuing a parallel proprietary stack with its Cybercab and FSD-based service, betting on a vision-only approach and the world’s largest installed base of consumer vehicles — and Tesla might also broadly license its FSD technology to other automakers, which would further entrench a proprietary stack as the industry default. The runners-up have collapsed. Argo AI (Ford and Volkswagen) shut down in 2022. GM ended Cruise in December 2024 after spending more than $10 billion, citing the “increasingly competitive robotaxi market.” Zoox is still mostly in testing.

Two leaders. Two proprietary stacks. One is part of the most powerful technology company in the world. The other is run by the most valuable car company in the world. And the runners-up have either died or remain orders of magnitude behind. This is precisely the market structure where Open Source Strategy thrives.

Now consider the long list of companies that depend on autonomous-vehicle technology but are not Waymo or Tesla. Every global automaker — Ford, GM, Toyota, Volkswagen, BMW, Mercedes, Stellantis, Hyundai, Honda, Nissan, Renault — and all their tier-one suppliers. Every ride-hailing company — Uber, Lyft, Didi, Grab, Bolt, Yandex, Ola. Every delivery and logistics platform — Amazon, FedEx, UPS, DoorDash, Instacart, Just Eat, Meituan, DPD, Deliveroo. Every retailer with a logistics arm — Walmart, Target, Alibaba, JD.com. Add in the cities, transit authorities, fleet operators, and academic institutions, and you have well over fifty large entities, with combined market capitalization in the trillions of dollars, all of which face the same strategic question.

Imagine this as a multi-player prisoner’s dilemma. Choice A: try to win the AV race outright, against Waymo and Tesla. Spend tens of billions of dollars over a decade with very low odds of success. Choice B: support a global open standard that ensures no party emerges as the Microsoft of autonomy. Capital required is a fraction of Choice A, and you maintain the status quo with respect to your historic competitive position. With fifty-plus players sitting at this table, the math is brutal: most of them cannot win Choice A. The only question is when they admit it and act accordingly.

The capital math has only gotten more punishing. Cruise spent more than $10 billion and didn’t make it. Argo went to zero. Ford and VW absorbed billions in losses. Even Waymo, which is by every measure the leader, is still consuming over $1 billion per quarter within Alphabet’s “Other Bets” segment. If Waymo needed more than $45 billion in combined Alphabet and outside capital to get to where it is today, what does it cost Toyota or Stellantis to catch up? The Cathedral path is closed for almost everyone. Choice B is the only economically rational move for the rest of the industry.
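The Choice A versus Choice B comparison can be framed as a simple expected-value calculation. The Python sketch below is illustrative only. The $45 billion cost for Choice A borrows Waymo’s capital figure from the text as a stand-in for the cost of catching up. Every other input (the success probabilities, the payoffs, and the consortium cost) is a hypothetical placeholder, not an estimate. Readers should substitute their own assumptions.

```python
def expected_net_value(cost, success_probability, payoff_if_success):
    """Expected payoff minus up-front cost, in billions of dollars."""
    return success_probability * payoff_if_success - cost


def break_even_probability(cost, payoff_if_success):
    """Success probability at which the expected net value is exactly zero."""
    return cost / payoff_if_success


# Choice A: try to win outright. The cost reuses the $45B figure cited in the
# text; the probability and payoff are HYPOTHETICAL placeholders.
choice_a = {"cost": 45.0, "success_probability": 0.05, "payoff_if_success": 200.0}

# Choice B: back a shared open standard. ALL three inputs are HYPOTHETICAL.
choice_b = {"cost": 2.0, "success_probability": 0.50, "payoff_if_success": 30.0}

value_a = expected_net_value(**choice_a)
value_b = expected_net_value(**choice_b)
needed_a = break_even_probability(choice_a["cost"], choice_a["payoff_if_success"])

print(f"Choice A expected net value: {value_a:.1f}B")
print(f"Choice B expected net value: {value_b:.1f}B")
print(f"Choice A break-even success probability: {needed_a:.1%}")
```

Under these placeholder inputs, Choice A only breaks even if its odds of success are several times higher than assumed, which is the shape of the argument above. The conclusion depends on the inputs, and the sketch is meant to make those inputs explicit.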

Eyes Towards China

There are many obvious reasons to pay attention to how AVs are evolving in China.

First, China is the runaway global leader in EVs. Not by a little. By a lot. BYD overtook Tesla in 2025 to become the world’s largest EV seller. Chinese automakers now account for roughly 70% of global EV production, with vertical integration and supply-chain depth that Western competitors cannot match. Chinese brands now own 85% of EV sales in markets like Brazil and Thailand. Western automakers see exactly where this is going — Ford announced a $19.5 billion writedown on its EV strategy in December 2025 — but they cannot get to the price points Chinese manufacturers have already hit. Cheap, well-built EVs are flying off the lot. They just happen to be made in China. And this matters directly for AVs. The base vehicle is the largest single cost in any robotaxi. Even Waymo had to turn to China. Why? Cost. Waymo’s sixth-generation Ojai robotaxi is built on Geely’s Zeekr platform — assembled in Arizona, but with a Chinese-built base vehicle underneath. The arrangement is messy and getting messier — tariffs on Chinese EVs, IP and connected-vehicle concerns from U.S. regulators, and a 2025 Commerce Department rule that will further restrict Chinese AV components in the years ahead. But the underlying point survives all of it: even the Western leader could not ignore the cost advantage.

Second, China has many AV participants — not one or two. Baidu’s Apollo Go has logged over 240 million autonomous kilometers across more than 20 cities, with over 17 million paid rides. Pony.ai is the only company in China offering fully autonomous robotaxis across all four tier-one cities, with global expansion to Dubai, Singapore, South Korea, and Luxembourg. WeRide operates in 11 countries with partnerships including Grab, Uber, and ComfortDelGro. Add AutoX, Didi Chuxing, DeepRoute.ai, and Momenta. China has at least six well-funded AV companies competing simultaneously, with manufacturing partnerships across most of the country’s automakers. This is the structural opposite of the U.S. Waymo/Tesla duopoly.

Third — and worth pausing on — China actually started this. Baidu launched Apollo in July 2017 as an open source autonomous driving platform, explicitly modeled as “the Android of autonomous driving,” with source code on GitHub and roughly 100 partners signed on, including Toyota, Geely, Daimler, BMW, Hyundai, Ford, Nvidia, Bosch, and Intel. The vision was correct. The execution never fully matched it. Baidu let the commercial Apollo Go robotaxi business eclipse the open platform, never moved Apollo into a neutral foundation, and the broader industry never coalesced around it the way the OEMs coalesced around Android. Apollo as an open source platform exists — and is meaningful — but it never became the industry-wide consortium that this post argues for. The original idea is now nearly a decade old and still waiting for a serious champion.

Fourth, an open source AV consortium is still the natural next move — and China is best positioned to lead it. Chinese government policy already favors open source as national strategy. The country has a deep manufacturing base, multiple competing AV stacks, a permissive regulatory environment, and the lowest-cost EVs in the world to put underneath any AV stack. The same logic that produced an open Chinese AI ecosystem applies here: many participants benefit from a shared base layer, the government supports it, and the result is a stack that is “sanction-proof” because the project itself is governed openly under a neutral foundation. Whether this happens through a re-energized Apollo, a new consortium, or government-driven coordination is an open question. But the structural conditions are present, and the precedent — both the Apollo attempt and the broader Chinese open source playbook — is clear. Don’t be surprised if China leads, formally or informally, the first major open source AV consortium of consequence.

Using Google’s Own Playbook Against Google

Waymo is clearly the market leader. The company is a generational success — the only Western entity to have deployed safe, fully driverless commercial service at meaningful scale, with safety data showing 90% fewer serious-injury crashes than human drivers across more than 127 million autonomous miles. They have earned every bit of that lead. But Waymo’s structural position today looks far more like Apple’s in 2008 than Google’s. Apple’s iPhone lead was real, durable, and earned through years of hard engineering. It still got commoditized at the platform layer — by an open source consortium that Google itself led — even as Apple continued to thrive at the premium tier of the market.

So the only real question left is whether the non-Waymo players have the intelligence and the chutzpah to use Google’s own playbook against Google. Think about that for a moment. Google built the most successful Open Source Strategy in corporate history. They ran it against Apple. They ran it against Amazon. They won both times. The exact blueprint is sitting in the public record, taught in MBA classes, and re-validated every year by the success of Android and Kubernetes. The non-Waymo players do not need to out-innovate. They need to collaborate. The question is whether anyone among them — Toyota, VW, Stellantis, Ford, Uber, Amazon, BYD, any of them — has the strategic vision to recognize the opportunity, the conviction to be the first mover, and the chutzpah to do to Google exactly what Google has done to two of the most powerful incumbents in the history of technology. That is the test. That is the entire question.

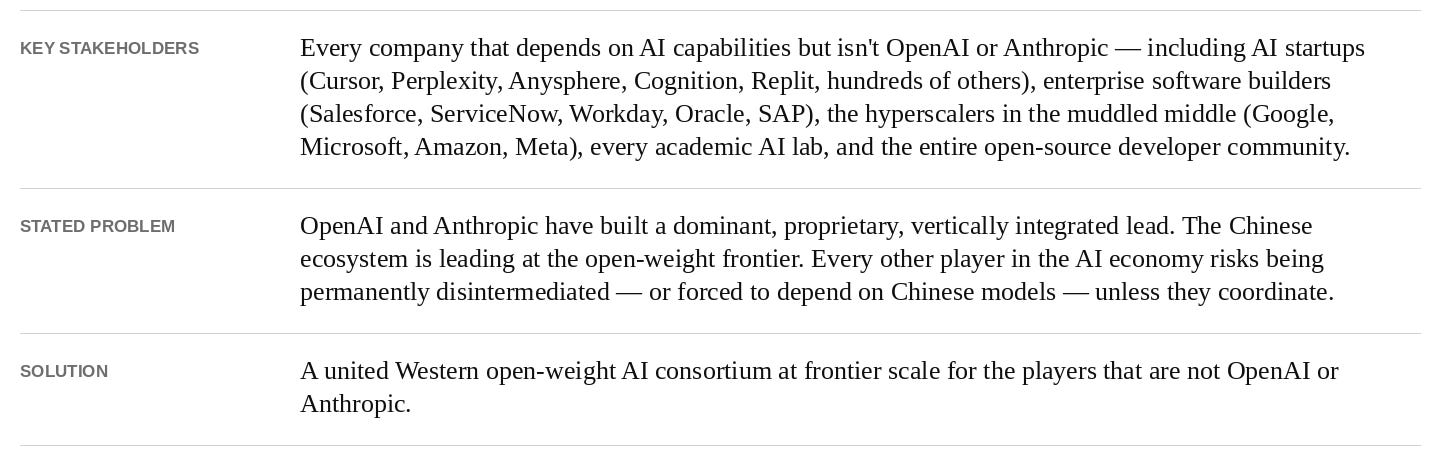

Open Source and AI

The second live example is artificial intelligence — the most discussed topic in technology by a wide margin, and the case where the open-versus-closed question is most contested.

A quick note on terminology before we go further. “Open source” in AI is not quite the same thing as in software. The most consequential open layer in AI right now is open weights — model parameters released for anyone to download, run, and fine-tune, even when the underlying training code and data are not. Open-weight models are what allow startups, researchers, and enterprises to compete without paying rent to a closed-Cathedral vendor. They are what let an entire ecosystem of inference, serving, and tooling emerge in the open. When I refer to the “open ecosystem” in AI, that is mostly what I mean. The structural dynamics — commoditization, supplier-power neutralization, follower’s-weapon strategy — are the same as the software case. The terminology is just a little different.

Why an Open AI Solution Is the Right Answer

The case for an open AI ecosystem maps cleanly onto the case I have been making throughout this post. Without open weights, the AI economy collapses to two or three Cathedral vendors who can dictate the terms on which every other party operates. Open weights solve this in three concrete ways.

First, no lock-in. With open-weight models, startups and enterprises can self-host, fine-tune, switch providers, or fall back to local inference whenever they need to. They are not negotiating API terms with two incumbents who can change pricing or shut off access at will. This is the most basic protection against platform-tax dynamics, and closed weights eliminate it entirely.

Second, real academic participation. AI research depends on access to the most capable models. If frontier capability is locked behind closed APIs, the academic community is reduced to either renting model access at commercial rates or studying second-tier models that nobody actually uses in production. That is not how meaningful AI research can happen at scale. An open-weight frontier is the only structure that lets universities do real work on safety, interpretability, alignment, and capability research.

Third, little tech can still build. Every AI startup, every solo developer, every two-person team building a product on top of AI infrastructure depends on having access to good models at affordable prices. Closed Cathedrals can extract platform tax indefinitely if there is no credible open alternative. An open-weight ecosystem keeps the floor on pricing competitive and gives little tech a fighting chance to build durable businesses without betting their company on the goodwill of two vendors.

The strategic logic is the same as every previous case in this post. AI is not magically different.

The State of Play

Four facts about the current AI landscape are worth getting on the table together.

First, China currently leads at the open-weight frontier. DeepSeek, Alibaba’s Qwen, Moonshot’s Kimi, Zhipu’s GLM, MiniMax, and others. Multiple deep-pocketed players. National-strategy backing across two consecutive Five-Year Plans. Capable, fast-improving, and openly released. This is not contested. Demis Hassabis, the head of Google DeepMind, said it directly in a recent interview at Y Combinator: “a lot of the Chinese models are excellent and they’re currently leading in open source.” Major American companies have noticed. Anysphere built Cursor’s Composer 2 model on Moonshot’s Kimi. Airbnb’s customer service agent runs on Alibaba’s Qwen — Brian Chesky told Bloomberg that Airbnb relies “a lot” on Qwen because it is “very good” and “fast and cheap.”

Second, OpenAI and Anthropic lead at the absolute frontier — and both are closed. Both are American. Both have closed weights. Both have enormous capital advantages. Both are pursuing the most aggressive Cathedral playbook in the industry. The structure rhymes with mobile in 2008, and OpenAI in particular looks a lot like Apple did then.

Third, hyperscaler commitment to open is mixed and muddy. Google has Gemma at the open layer and Gemini at the closed frontier — running both tracks simultaneously. Microsoft is OpenAI’s largest backer but also distributes Llama, Mistral, and other open models on Azure. Amazon — we will come back to this one — has been the most curiously absent. Meta, the most vocal open-weight advocate in the U.S. for years, has clearly pulled back. Llama 4 underperformed at launch in 2025. Llama 4 Behemoth, the planned frontier model, was shelved. Mark Zuckerberg confirmed in July 2025 that Meta would not release superintelligence-capable models openly. Meta Superintelligence Labs released Meta’s first frontier-class closed model — Muse Spark — in April 2026, with weights withheld. Two years ago, Zuckerberg published a manifesto titled “Open Source AI is the Path Forward.” It reads very differently today. Meta is no longer a reliable Western open-frontier player.

Fourth, regulation could close the market instead of increasing competition. This is the most consequential development. The closed-Cathedral incumbents have powerful incentives to use national-security framing and China-hawk anxiety to push regulators toward outlawing open competition — because if you cannot beat the open ecosystem on quality and price, you have to beat it in Washington. The playbook is already visible. On April 29, 2026, Semafor reported that two House committees sent letters to Anysphere and Airbnb demanding information about their use of Chinese open-weight AI models — even though both companies independently chose those models on pure price-performance grounds. The natural endpoint of this trajectory is restricting or banning Chinese open-weight models on national-security grounds, which would conveniently leave only the U.S. closed-Cathedral incumbents standing in the U.S. market. This is the most important fight in technology policy right now, and it is being framed almost entirely on the incumbents’ terms.

Will an Open Leader Emerge in the West?

So the obvious question is: who, if anyone, in the West can play the open-weight role at frontier scale?

Mistral is the closest thing the West has to a serious open-weight contender. The French startup has continued shipping aggressively under Apache 2.0 — Mistral Large 3 in December 2025, a flurry of releases in early 2026, ARR growth from $20 million to $400 million in a year, an $830 million raise to build EU data centers. They are trying. But at 675 billion total parameters, Mistral Large 3 sits a tier below the absolute frontier.

Google’s Gemma is open at the edge — useful, well-engineered, and downloaded tens of millions of times. But Google has been clear that Gemini at the frontier remains closed. Its open-weight commitment through Gemma extends only to the “nano size” level.

xAI has made some open-weight moves but the strategy is unclear. Meta has stepped away. Apple, Amazon, and Microsoft have nothing meaningful at the open frontier.

The honest assessment is that there is currently no credible Western open frontier player. Mistral is closest, Gemma is partial, and beyond that the field is empty. The structural conditions that would call one into being — closed-Cathedral incumbents extracting rent, a Chinese-led open ecosystem demonstrating that the playbook works — are present and intensifying. The will to organize around an open alternative is not.

And If Not, the Rest of the World Runs on Chinese Models

If a credible Western open frontier player does not emerge, the consequences cascade quickly.

This is the inverse of the early Internet wave. In the 2000s and 2010s, Western companies — Google, Facebook, Amazon, Apple, Microsoft — dominated globally while China carved out its own walled garden. The AI version flips that dynamic on its head. Without a credible Western open frontier player, the only open models capable of running entire economies are made in China. If U.S. policy further restricts Chinese open-weight access on national-security grounds, the U.S. ends up with two or three closed Cathedrals serving the U.S. market — and the rest of the world picks the AI stack that is free, capable, self-hostable, and not embargoed. Europe, Africa, Southeast Asia, Latin America, India, the Middle East. Roughly six billion people. Chinese open models become the global default by 2030, and the United States ends up technologically isolated from the majority of the world’s AI users. We would have done it to ourselves.

Watch what happens to AI infrastructure over the next twenty-four months. And watch Washington just as carefully.

Conclusion

Open source is no longer just how good software gets built. It is how dominant incumbents get neutralized, how trillion-dollar industries shift their power structure, and how the next generation of strategic moats gets dug — by the companies smart enough to dig them in the open. The world’s most sophisticated technology companies have spent fifteen years quietly mastering this. Most of the world is still treating open source as a development philosophy when it has long since become a corporate weapon. That gap in understanding is itself a form of structural disadvantage.

If you run a company in any industry that touches technology, the question is no longer whether to engage with open source. It is whether you are using it strategically — to neutralize a stronger rival, commoditize an expensive input, align an industry around a shared standard, or simply protect your own position in the value chain — and whether your competitors have figured out something you have not.

If you sit in policy or government, the question is whether you understand what is at stake. The closed-Cathedral incumbents will tell you that open source is a national security risk and that protecting American technology requires protecting them. Be very careful which arguments you accept and from whom. Choosing the wrong side of this fight will not just hand the global AI ecosystem to China. It will permanently damage the most generative force in American technology — the open infrastructure that has powered every major software innovation of the last twenty-five years.

If you build technology — at a startup, in a research lab, on a weekend — the question is whether you can still imagine a future where the best tools, the best models, and the best infrastructure remain freely available to you. Because that future is no longer guaranteed. It is one regulatory cycle, one Cathedral consolidation, one Washington miscalculation away from being permanently narrowed.

A new world order in technology is being constructed in real time, and the role open source plays in that order is being decided right now — partly in foundation boardrooms, partly in earnings calls, partly in congressional hearings, partly in policy white papers being written by lobbyists for the largest closed AI companies in the world. The companies that understand this will compound their advantages over the next decade. The countries that understand this will lead the global technology landscape. The individuals who understand this will be impossible to outmaneuver.

Make sure you are one of them.